The rise of nudifying tools and their threats to children

4 November, 2025

Dr Shaunagh Downing, AI Lead

Content warning | This blog post discusses child sexual abuse and exploitation, including child sexual abuse material (CSAM).

The use of AI tools to generate non-consensual intimate images (NCII) of real people is a widespread and escalating threat, particularly when these tools are used to produce child sexual abuse material (CSAM). Exacerbating the issue are the mainstream accessibility and promotion of ‘nudifying’ services – apps and websites used to turn ordinary photos of someone into nude and sexualised depictions.

Here, we explore the worrying rise in nudifying apps and websites and AI-facilitated abuse, the harms they’re causing to children, and the urgent need to address the ecosystem that enables them.

The evolution of AI-facilitated abuse

Abusive AI apps and websites have existed for many years in the form of ‘face swaps’, which often superimpose the faces of women and girls onto existing pornographic images and videos.

By 2023, the rise of generative image models, including powerful open-source diffusion models such as Stable Diffusion, had made it possible for perpetrators to create new images from scratch or modify existing images with a high degree of realism.

This led to the development of nudifying apps and websites. These are built on top of open-source diffusion technologies and allow users to upload a photo of anyone, without consent or age checks, and receive back a realistic nude version of that image. The existence, accessibility, and ease of use of these nudifying services have created a world where anyone can create abusive and damaging images of others at the push of a button.

The evolution of abusive AI tools has not stopped there. As generative image models grow more powerful, many nudifying services can now create images that depict real people in different sexual positions. In addition, recent advances in generative AI for video mean that anyone’s likeness can be inserted into a fully generated explicit video.

Nudifying apps – an escalating threat to children

Nudifying tools modify images of people to ‘remove’ their clothing and depict them nude. There are no legitimate use cases for this type of technology; it exists only for the creation of non-consensual material, and yet it doesn’t lurk in the shadows. These tools are widely available and actively promoted across mainstream platforms.

Children are also being exposed to this technology – with AI-created nude images now affecting more than half a million children in the UK alone. These experiences vary from sending or receiving an AI nude, to using the technology to create one themselves, to being depicted in this kind of material. This highlights a complex dynamic in which children can be both victims and perpetrators of AI-facilitated abuse.

What’s driving the growth of this technology? We consider two factors here – its accessibility, and the broader ecosystem that enables and profits from it.

The accessibility of nudifying technology

Nudifying technology is extremely accessible. Anyone with an internet connection and a single image can use nudifying technology to create abusive and harmful material.

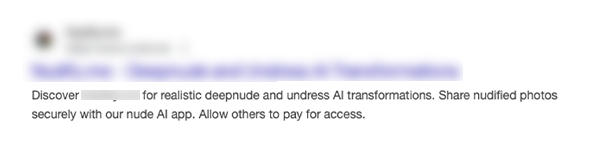

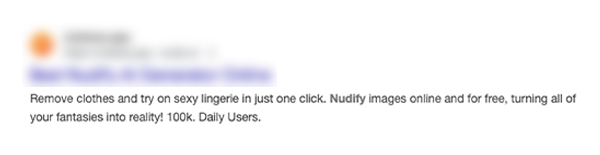

Most apps and websites require no technical knowledge to use and are easily discoverable through everyday channels. In a study by Thorn, 71% of teens who had used or encountered nudifying technology said they discovered it through social media, 53% discovered it through search engines, and 70% accessed it through their device’s app store. As the redacted screenshots show, nudifying services are not hidden on the dark web; they exist in plain sight.

It is therefore no surprise that nudifying websites attract significant traffic: a study of 85 nudify websites by Indicator calculated that, together, they averaged 18.5 million visitors per month.

The ecosystem enabling nudifying technology

Nudifying services don’t exist in isolation. They rely on a wider ecosystem of mainstream technology providers to function, scale and monetise.

A 2024 study found that mainstream payment services, including PayPal, Patreon, Apple Pay, credit card companies, and others, facilitate monetisation of these services. As a result, nudifying services have become a highly lucrative business. One study estimated they may be making up to $36 million per year.

The inclusion of nudifying apps and websites in app stores (such as Apple’s) and search engine results (such as Google’s), as evidenced in the Children’s Commissioner’s 2025 report, not only makes this technology easily available to a wide audience and extends its reach, but also lends it a sense of legitimacy in the eyes of users seeking it out.

Furthermore, this harmful technology is actively promoted. Various social media companies have allowed nudifying services to buy advertisements on their platforms. Some reports have shown that platforms such as Instagram have been advertising non-consensual AI nudify apps to users, with taglines such as “Undress any girl for free”. In fact, an analysis of one nudifying service in December 2024 by Faked Up found that 90% of its traffic came from Meta-owned platforms.

Infrastructure providers also play a critical role in supporting nudifying tools. Investigations from Indicator Media show that Amazon and Cloudflare provide hosting or content delivery services for dozens of nudifying sites, while Google has enabled single sign-on in numerous cases.

Big Tech companies are amplifying this harmful technology, expanding its reach, enabling its profit model and legitimising its existence, while themselves generating revenue from its promotion.

How does nudification technology threaten children?

The rise of nudifying apps is affecting children in myriad ways, from the psychological harm of being victimised, to the intersection of AI-facilitated abuse with other forms of online and in-person abuse.

Here, we explore some of these impacts in more detail.

Psychological and emotional impact

Misconceptions persist about the impact of nudifying technologies, and of AI-facilitated abuse in general. Because the content is generated by an algorithm, some people believe that it is victimless, and therefore harmless.

However, survivors of this kind of abuse have continually described the emotional, psychological, and social harms caused by being depicted in synthetic non-consensual intimate material. This includes the deep feelings of shame and fear they experience, alongside a loss of autonomy. As Elliston Berry describes:

“I was just a 14-year-old girl, and everyone is seeing my body, and even though it’s not my body, it has the same shame and the same guilt as a real photo would have.”

Although misconceptions persist, a report by Thorn found that young people overwhelmingly recognise these harms, with 84% of young people believing that AI-created nude images are harmful to those depicted. Many young people describe the violation as lying not in how the content is created, but in its very existence and consumption.

Similarly, Internet Matters found that the majority of teenagers believe it would be worse to have an AI‑generated nude shared of them than an authentic photograph. The reasons for this include:

- A complete lack of autonomy

- The fact that the image can be manipulated to make the victim appear in any way the perpetrator wants

- The possibility that victims may not even be aware the image exists

- The anonymity of the perpetrator

- Fears that family members, teachers or peers might believe the image is authentic, given how realistic it is

When a child, or anyone, is depicted in non‑consensual sexual material, regardless of whether the content is ‘synthetic’, the harm is real and deeply felt.

Gendered impacts and school‑based abuse

From its earliest days, AI-facilitated abuse has disproportionately affected girls and women. Of the early face swap-type videos online, 98% were non-consensual (so-called ‘deepfake pornography’) and 99% of those targeted were female. More recent research confirms that abusive AI technology is still mainly targeted at women, with 95% of nudifying websites studied focusing explicitly on women and girls.

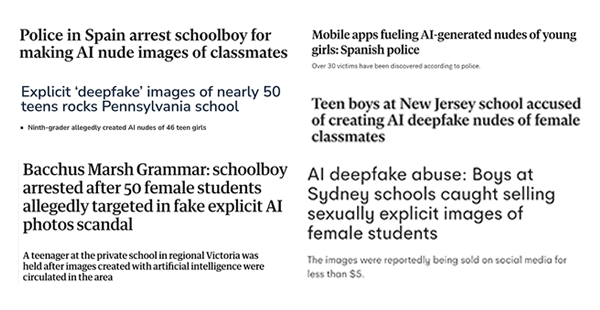

These biases have hit teenage girls particularly hard, with school-based AI-facilitated abuse reported around the world. From Europe to North America to Australia, there have been repeated reports of male minors creating, distributing, and even selling AI‑generated nude images of their female peers. In many cases, dozens of girls at a single school have been victimised.

Compounding the harm, many young people don’t feel supported in the aftermath: only 36% of female students felt their school could effectively support victims of synthetic NCII.

The harm extends beyond those directly depicted. A student at Beverly Vista Middle School expressed the anxiety many girls now feel:

“It’s very scary, because people can’t feel safe to, you know, come to school. They’re scared that people will show off, like, explicit photos.”

Nudifying technology not only reinforces misogyny, but it enables harassment and bullying in school environments, leaving girls vulnerable to abuse and afraid in spaces where they should feel safe.

The increased threat of sexual extortion

Nudifying technology has also become a tool for sexual extortion (or ‘sextortion’), a crime that is surging globally. The Internet Watch Foundation recently reported that sextortion cases have risen by 72% in a single year.

Sexual extortion often involves criminals tricking children and young people into sending nude or sexual images of themselves, which are then used to blackmail them into sending money or more explicit content. But with nudifying technology, offenders no longer need to coerce children to send genuine intimate images to exploit them. They can simply create those images with AI. A recent study by the Australian Institute of Criminology found that 41% of adolescents who were sexually extorted said the material used to extort them had been manipulated.

Because these AI images can be produced quickly and in large volumes, nudifying tools may be used to scale extortion attacks, potentially overwhelming victims and law enforcement, and increasing the likelihood of children being hurt.

The intersection of nudifying technology and sexual extortion demonstrates how AI is accelerating forms of abuse that were already among the most serious threats to children’s safety.

To learn more, read: How is AI changing the scale and scope of online enticement?

Looking ahead to a safer world for children

Preventing AI-facilitated abuse requires intervention across the technology ecosystem.

Crucially, the companies responsible for developing generative AI models must consider how their technologies are being misused. To counteract these potential threats, they must embed Safety by Design principles throughout the development of any and all future AI technologies.

Responsibility also lies with the platforms hosting and promoting these tools. These companies must proactively remove any nudifying services listed on their app stores, search engines, and social media platforms to prevent their spread and use.

Policymakers also have a vital role to play. Companies should face meaningful consequences when they fail to prevent the monetisation and amplification of abusive AI tools, and that depends on robust regulation banning nudification apps and their promotion.

Read more: AI nudification: how do we combat AI-enabled NCII abuse?

If you have been affected by non-consensual material, the following organisations and resources are available for support:

Child Safety Online [Free report]

To continue learning about the threats facing children today, including the rise of nudifying apps, consider reading our Child Safety Online Report next. In this report, we explore the risks of AI, online gaming platforms, and the dark web, alongside the challenges facing investigators of crimes against children.